One of the best-known tradeoffs in materials science is the strength-ductility tradeoff. Improving the strength of a material usually reduces its ductility (i.e., its ability to deform without fracturing). In such a case, there is no single optimal material; depending on the application, one has to strike a balance between strength and ductility.

How can we deal with this problem of having multiple objectives in a materials discovery setting? Since we do not know a priori what the best tradeoff between these objectives (e.g., strength and ductility) is, the best we can do is to provide the set of all optimal tradeoffs; e.g., for a given strength, the best ductility we can obtain. This is known as the Pareto set: the materials for which no objective can be improved without making another one worse. Once we have this Pareto set, we can pick, for a given application, the material that offers the optimal tradeoff.
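
To make the notion of a Pareto set concrete, here is a minimal sketch in plain Python. It assumes that every objective is to be maximized and that all values are already known; the `(strength, ductility)` numbers are made up for illustration, and the helper names `dominates` and `pareto_set` are our own.

```python
from typing import List, Tuple

Point = Tuple[float, ...]


def dominates(a: Point, b: Point) -> bool:
    """Return True if `a` is at least as good as `b` in every objective
    and strictly better in at least one (all objectives are maximized)."""
    at_least_as_good = all(x >= y for x, y in zip(a, b))
    strictly_better = any(x > y for x, y in zip(a, b))
    return at_least_as_good and strictly_better


def pareto_set(points: List[Point]) -> List[Point]:
    """Keep only the points that no other point dominates."""
    return [p for p in points if not any(dominates(q, p) for q in points)]


# Hypothetical (strength, ductility) values in arbitrary units.
materials = [(1.0, 9.0), (3.0, 7.0), (2.0, 6.0), (5.0, 4.0), (4.0, 3.0), (7.0, 1.0)]
front = pareto_set(materials)
print(front)
```

In this toy example, `(2.0, 6.0)` and `(4.0, 3.0)` drop out because `(3.0, 7.0)` and `(5.0, 4.0)`, respectively, are better in both objectives. The pairwise comparison scales quadratically with the number of candidates, which is fine for a sketch but not for very large design spaces.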

If we had a way to enumerate all possible materials, we could perform experiments on all of them and in this way identify the Pareto set. However, even with efficient computational (in silico) experiments, this quickly becomes infeasible. Therefore, multi-objective optimization is an important challenge in materials design.

Can we do better? The first step one would take is a careful experimental design to minimize the number of experiments needed to explore the design space. A conventional experimental design, however, does not leverage patterns one might find in the experiments that were already performed. Using active machine learning, we can learn from the experiments that have been done and use this knowledge to propose the most informative next experiment, so that we obtain the Pareto set efficiently.

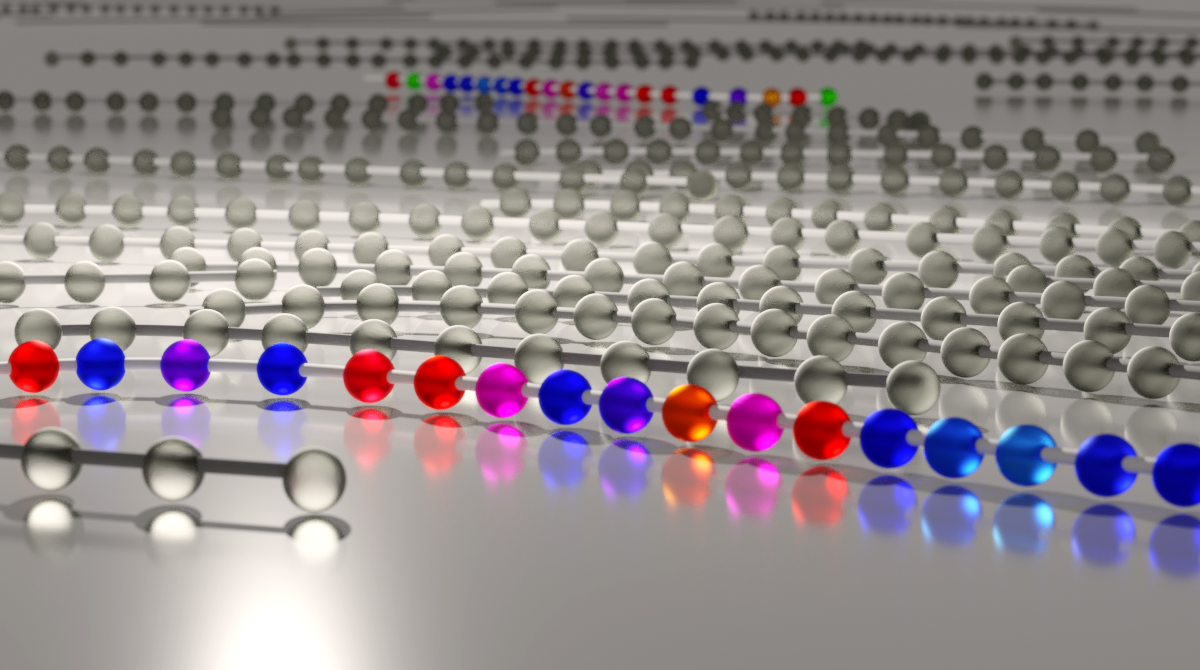

In our work, we focus on finding the Pareto set of model dispersing agents for pigments. Such dispersants are used in coating and paint applications to increase color brilliance and stability. In this case, one wants the dispersant polymer to bind to the surface of a pigment particle and repel the other pigment particles without making the solution too viscous.

To find good candidates in this design space, we adapted the active learning algorithm from Zuluaga et al. [1] to the materials discovery setting. One crucial difference between this active learning approach and many other Bayesian optimization techniques is that we do not attempt to compare apples with pears. That is, we do not attempt to define one overall acquisition function that needs to balance the different objectives in some (arbitrary) way. Instead, we just use the geometric interpretation of the Pareto dominance relation to identify which materials we can discard with confidence.
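
The geometric idea can be sketched as follows. This is a simplified illustration, not the implementation used in our work or in [1]: we assume that a surrogate model returns a predicted mean and standard deviation per objective, that all objectives are maximized (an objective to be minimized, such as viscosity, would be sign-flipped first), and that the uncertainty region of each candidate is a box of `beta` standard deviations around the mean. The names `uncertainty_box`, `can_discard`, and `discard_with_confidence`, as well as the parameters `beta` and `eps`, are our own choices for this sketch.

```python
from typing import Dict, List, Sequence, Tuple

Box = Tuple[List[float], List[float]]  # (lower bounds, upper bounds), one entry per objective


def uncertainty_box(mean: Sequence[float], std: Sequence[float], beta: float = 2.0) -> Box:
    """Box spanning mean +/- beta * std in every objective (assumed model output)."""
    lower = [m - beta * s for m, s in zip(mean, std)]
    upper = [m + beta * s for m, s in zip(mean, std)]
    return lower, upper


def can_discard(candidate: Box, rival: Box, eps: float = 0.0) -> bool:
    """True if even the candidate's best case (upper corner) is no better, up to a
    tolerance eps, than the rival's worst case (lower corner) in every objective."""
    _, candidate_upper = candidate
    rival_lower, _ = rival
    return all(u <= l + eps for u, l in zip(candidate_upper, rival_lower))


def discard_with_confidence(boxes: Dict[str, Box], eps: float = 0.0) -> List[str]:
    """Names of candidates beaten by at least one not-yet-discarded rival."""
    discarded: List[str] = []
    for name, box in boxes.items():
        rivals = [b for n, b in boxes.items() if n != name and n not in discarded]
        if any(can_discard(box, rival, eps) for rival in rivals):
            discarded.append(name)
    return discarded


# Hypothetical predictions: name -> (means, standard deviations) for two objectives.
predictions = {
    "polymer_A": ([5.0, 5.0], [0.2, 0.2]),
    "polymer_B": ([3.0, 3.0], [0.5, 0.5]),
    "polymer_C": ([4.0, 6.0], [1.0, 1.0]),
}
boxes = {name: uncertainty_box(mean, std) for name, (mean, std) in predictions.items()}
discarded = discard_with_confidence(boxes)
print(discarded)
```

Here only `polymer_B` is discarded: its most optimistic prediction is still worse than the most pessimistic prediction for `polymer_A` in both objectives. `polymer_C` stays in the pool because its large uncertainty box still overlaps with possibly optimal values, so measuring it would be informative. No weighting between the objectives is needed at any point.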

In this way, we could classify the relevant polymers in our design space with only a fraction of the experiments that would have been needed in a brute-force or random search.

[1] Zuluaga, M., Krause, A. & Püschel, M. ε-PAL: An active learning approach to the multi-objective optimization problem. Journal of Machine Learning Research 17, 1–32 (2016).
